Down beneath everything we know lies another world. A world where nothing is forever, nothing is impossible, and nothing is certain. This is the quantum realm, where atoms and electrons are best represented as time-varying functions instead of discrete values. If we can wrap our brains around its very different rules, there’s a lot of potential there, and certainly plenty of room for development.

Modern computers store and read out computations as the orientation of the magnetic field on a region of the hard disk – whether it’s south to north or north to south indicates whether it should be read as a 1 or a 0. As we seek to cram more processing power in a smaller and smaller space, the number of atoms contained in each region drops, until we aim to represent each bit as a single atom. At this level 1s or 0s would be encoded as quantum properties of each atom, with all the weirdness that implies. Bits encoded in this way would be quantum bits, or qubits, and need to be handled appropriately.

Complex evolution

Quantum systems evolve according to a complex function called the wave function. This describes the system in a probabilistic way, taking both space and time as variables. A fundamental axiom of quantum is that, until you interfere with the system by taking a measurement, you cannot describe it more precisely. Once you disturb it, you know how it was when the measurement was taken, but not how it is evolving now. This restricts you to know the state of a system, or how it changes with time, but not both. That’s Heisenberg’s uncertainty principle.

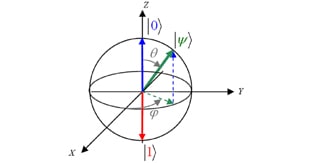

This theoretical basis has very practical implications for building a computer on a quantum scale. Unlike a traditional bit, which is definitively 0 or 1, a qubit can be a linear combination of the mode representing 0 and the mode representing 1. The complex coefficients of each of these terms give their relative probability of occurring when a measurement is made. But it is impossible to know which one will be the final state until the measurement is taken.

Poking at black boxes

However, there are certain processes which can be performed on a qubit which do not interfere with its evolution. A quantum computer would be built out of these processes in the same way that regular computers are composed of logic gates. The first example of how to use these sort of processes to solve a task a classical computer cannot do was given by David Deutsch.

Say we have a black box which performs a function f on n bits, and for each one outputs 0 or 1 as the final value or output. If the output is the same no matter what the input, f is a constant function. If the outputs are 0 for half the bits and 1 for the other half, f is a balanced function. Classically, to find whether f is constant or balanced would require running f twice, once for each possible input. But using quantum computation techniques, we can find this with only one input – by inputting a superposition of both 0 and 1. It might not be very impressive to save one query, but when f takes a large number of bits as input, the time savings mount up. This was the first formal proof that quantum information theory could break the limits imposed by traditional computation.

Quantum secret breaking

But the most exciting application of quantum computation (or worrying, if you work in computing, finance or defence) is in cryptography. RSA, the standard for encryption which makes electronic communication secure, relies on it being difficult to factorize multiples of large primes. However, of the five or so quantum algorithms that exist at the moment, one does just that. On a classical computer, the best available factorizing algorithm takes a time proportional to the exponential of the number being factored. The Shor algorithm reduces this to a polynomial function of the integer, threatening to break the security of RSA encryption if it can be implemented.

How does the Shor algorithm work? Finding prime factors relies on finding the periodicity, p, of the function f(x) = xa mod N (again, N is the number to be factored). This is done by creating two linked bits of quantum circuitry: one containing a superposition of possibilities for a, and one containing a superposition of the results of trying those possibilities. When the second part is measured, both sides of the system collapse into just one state – on the first side, the specific value of a that ended up being measured, and on the second side the result it produces – let’s call it k. Because f(x) is periodic, there will be many values of a that can produce k, appearing at intervals of p. Performing a quantum Fourier transform on this part of the system boosts the probability of reading out one of these periodic values (now spaced at intervals of 1/p after the FT). A few measurements like this yield a ‘good guess’ for the periodicity of f(x). With a clue like this, a classical computer has a headstart on finding the possible factors of N, and can swiftly unravel the encryption protocol.

The security-conscious shouldn’t start sweating just yet, however, as the largest number which has yet been split into prime factors is the gigantic 15. If the implementation problems can be cracked though, the entirety of electronic communication is on thin ice. And if one quantum algorithm can change that much, what will the next one do?

Related Links

Lectures on Quantum Computation by David Deutsch

A series of lectures designed as an introduction to the quantum theory of computation.